The Salute AI team attended NVIDIA GTC 2026 this year, and as with last year’s event, it was one of the most thought-provoking and energizing experiences the industry has to offer. If GTC 2025 was the moment the industry came to terms with the sheer scale of what agentic AI would demand, GTC 2026 was the moment it started to reckon with what actually delivering that at scale requires. The conversations have matured, the numbers have gotten larger, and the operational challenges have come into sharper focus.

From ambition to architecture

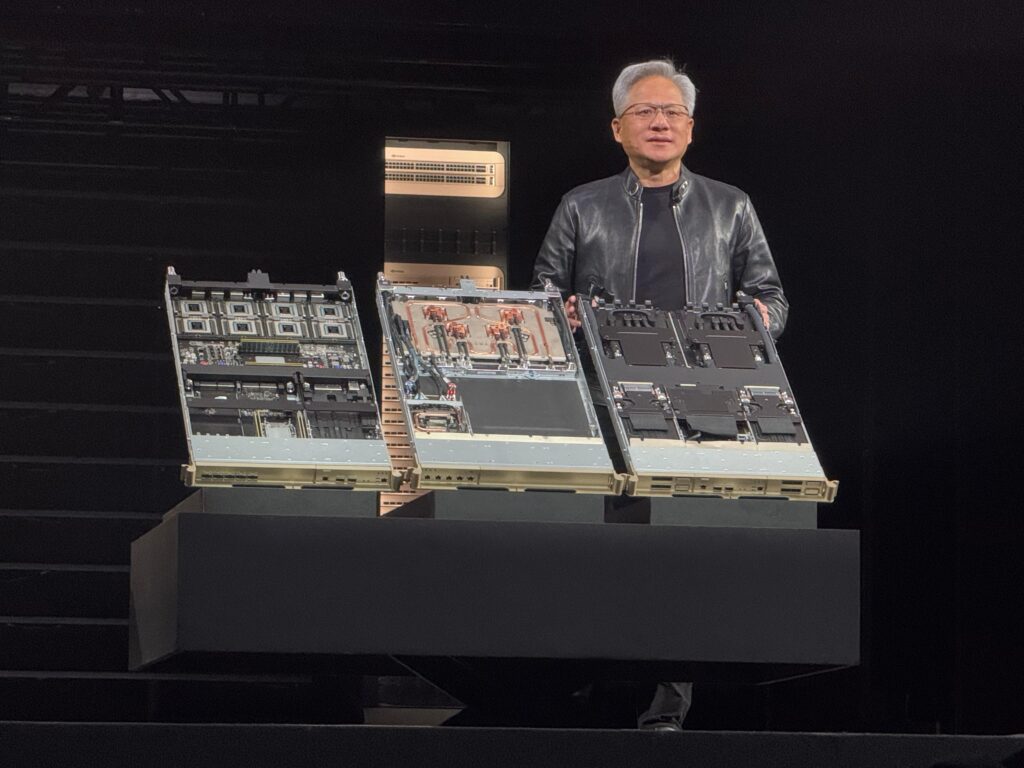

At GTC 2025, Jensen Huang traced the arc from perception to generative to agentic AI, making the case that each chapter multiplied compute requirements by an order of magnitude. The Blackwell platform was unveiled as the hardware foundation for this new era, with rack power densities moving from 130kW toward projections of 200kW and beyond. The message was clear: AI infrastructure was no longer a generic compute environment. It was an AI factory, purpose-built to produce intelligence at scale.

GTC 2026 took that framing and pushed it further. The new compute architecture delivers 35x better performance per watt compared to previous generations. On paper that sounds like relief. In practice, efficiency gains at the chip level do not reduce total power consumption when demand is growing as fast as it is. Every watt saved per operation gets immediately reinvested into more operations, more tokens, more inference capacity. The AI factory model has not become less power hungry. It has become more capable of consuming power productively.

The 100x number is real, and it matters

When you combine hardware efficiency gains with the scale of deployment being planned over the next three to five years, the industry is tracking toward something in the order of 100x more AI compute capacity than exists today. Not 100% more. 100 times more. That figure is not a projection from an analyst deck. It follows from the hardware roadmap, announced deployment plans, and the accelerating share of AI within total internet capacity. GTC 2026 confirmed the direction of travel in a way that leaves little room for skepticism.

What GTC 2025 framed as a coming transformation, GTC 2026 is actively building. The question for operators and infrastructure providers is no longer whether this demand is real. It is whether their operational models are ready to meet it.

Optical interconnects and the limits of decentralization

One of the more significant technical threads at GTC 2026 was the push toward optical interconnects, bringing high-bandwidth connectivity closer to the compute layer and enabling more flexible, partially distributed architectures. This builds on the networking philosophy introduced at GTC 2025 with technologies like NVLink and SpectrumX Ethernet, which were designed to minimize latency within the rack before thinking about scale-out.

The direction of travel is consistent: scale up before you scale out, then use connectivity to extend reach. Optical networks accelerate that second step, making regional inference factories and edge deployments more viable. But they do not eliminate the need for centralized infrastructure. Training workloads will remain highly centralized for the foreseeable future. The synchronization and latency constraints of large-scale model training do not disappear because the interconnects get faster. What changes is the architecture around inference, which becomes more distributed, more tiered, and more geographically flexible.

The infrastructure is tiered, not flat

What both GTC 2025 and GTC 2026 point toward, taken together, is a world of increasingly specialized infrastructure layers rather than a single homogeneous compute environment. Large centralized training clusters will continue to operate at gigawatt scale. Regional inference factories will handle production workloads at the tens of megawatts scale, closer to end users. Edge inference will extend capability into latency-sensitive or connectivity-constrained environments.

This tiered structure means that power and connectivity are now equally critical constraints, not competing ones. The infrastructural challenge is not moving from power to connectivity. It is expanding to include both simultaneously, at every layer of the stack.

What this means for Salute and the operators we support

Last year, Salute came away from GTC with a clear conviction: that the transition to direct-to-chip liquid cooling at scale was not optional, and that operational readiness, encompassing commissioning, facilities management, and lifecycle support, would determine which operators successfully protected their GPU investments and which did not. That conviction has only deepened.

GTC 2026 reinforces that the complexity of what is being built is intensifying, not stabilizing. Rack densities are continuing to climb. Power and cooling requirements are becoming more exacting. The margin for operational error is shrinking as infrastructure becomes more sensitive and more expensive. The organizations that will be best positioned are the ones that treat infrastructure not as a commodity procurement exercise but as a strategic capability requiring specialist expertise across the full lifecycle.

That is precisely what Salute is built to deliver. Through advisory, design, commissioning, sustainability, and facilities management services tailored to the demands of high-density AI infrastructure, we are helping operators navigate this complexity with confidence. GTC 2026 was an invaluable learning experience, and a reaffirmation of why the work Salute does matters. We left energized, informed, and more committed than ever to helping our clients build infrastructure that is ready for what comes next.

Discover a smarter way to scale your AI infrastructure with Salute

As the AI era accelerates, Salute stands at the forefront of delivering the physical infrastructure that will power it. With a global footprint spanning 12 offices, 1,800+ employees, and operations in over 102 markets—adding up to support for 80% of the world’s data center operators—we bring trusted expertise across the full lifecycle. Whether scaling an AI factory, upgrading for dense rack requirements, or deploying next-gen cooling systems, Salute delivers the insight, execution, and operational excellence to scale safely and efficiently.